
Visualization and Real-time Rendering with RTX A5000

Real-time Rendering and Visualization

The digital landscape is continually reshaped by the relentless pursuit of more lifelike and efficient rendering technologies. At the core of this evolution is the need for real-time rendering and visualization, processes that have revolutionized industries from gaming to architecture.

Real-time rendering, once constrained by hardware limits, has reached the point where instantaneous visual feedback is a necessity rather than a luxury. Advanced GPUs and rendering technologies let artists and designers interact with their creations in real time, so ideas can evolve during the creative process. This responsiveness matters most in fields that depend on quick iterations and decisions based on visual data.

The advent of technologies like ray tracing has marked a significant milestone in rendering. Traditionally a computationally intensive process limited to offline rendering, ray tracing is now feasible in real time, thanks to dedicated hardware acceleration and advanced GPUs. This development brings unparalleled realism to visual outputs, allowing for reflections, shadows, and light interactions that closely mimic real-world physics.

Simultaneously, the rise of AI in rendering has opened new avenues for enhanced visual quality and efficiency. AI algorithms, particularly neural networks and deep learning techniques, are redefining the rendering process. These AI-driven methods can intelligently predict missing pixels, reduce noise, and optimize rendering settings, leading to faster rendering times without compromising the visual fidelity.

Moreover, cloud computing has revolutionized the rendering landscape, democratizing access to high-end rendering capabilities. Distributed cloud rendering utilizes a network of servers, providing scalable resources to handle extensive projects without necessitating significant hardware investments. This approach has significantly expedited rendering processes, allowing artists and studios to tap into vast computing resources on demand.

As we delve deeper into the capabilities of the RTX A5000, we will explore how it harnesses these advancements, enabling professionals to push the boundaries of what’s possible in real-time rendering and visualization.

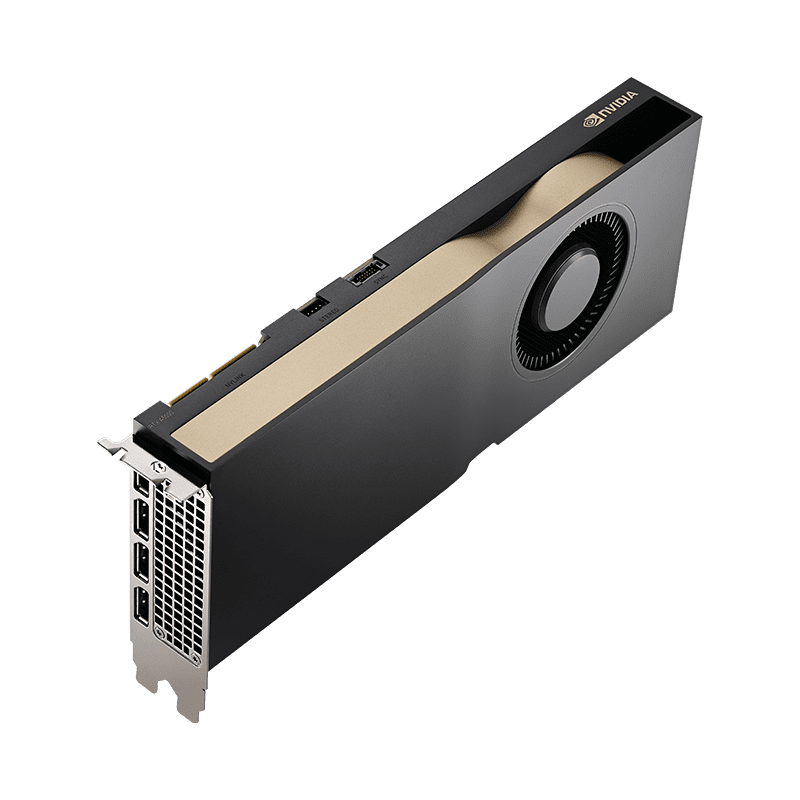

Overview of RTX A5000

The NVIDIA RTX A5000 combines power, performance, and precision in a professional graphics card. It is built on the NVIDIA Ampere architecture, which delivers up to 2.5X the single-precision floating-point (FP32) performance of the previous generation. This acceleration of graphics workflows lets professionals handle increasingly complex and intricate designs.

Equipped with second-generation RT Cores, the RTX A5000 redefines the boundaries of visual fidelity. It offers hardware-accelerated motion blur and up to twice the ray-tracing performance of previous models. This advancement is crucial for producing more accurate and lifelike renders rapidly, catering to the growing demand for realism in digital content.

In AI and data science, the RTX A5000’s third-generation Tensor Cores provide up to 10X faster training performance than the previous generation, enabling more efficient model training and AI-driven enhancements in various applications. This opens up intelligent rendering and computational workflows that were once time-intensive.

Addressing the challenges of memory-intensive workloads, the RTX A5000 comes equipped with 24GB of GDDR6 memory, complete with ECC (Error-Correcting Code). This vast memory capacity is pivotal for handling tasks ranging from virtual production to complex engineering simulations. It ensures that professionals can work on large-scale projects without compromising on speed or quality.

The graphics card is also virtualization-ready, supporting the transformation of personal workstations into multiple high-performance virtual platforms. This flexibility is essential in today’s diverse and remote working environments. Additionally, the inclusion of third-generation NVIDIA NVLink allows for the scaling of memory and performance across multiple GPUs, addressing the needs of larger datasets and more intricate models.

Complementing its performance features, the RTX A5000 is also power-efficient. Its dual-slot design is up to 2.5 times more power-efficient than the previous generation and fits a broad spectrum of workstation configurations. This attention to power efficiency is not just an operational advantage but also an environmental consideration, aligning with the growing emphasis on sustainable technology practices.

In summary, the RTX A5000 is not just a graphics card; it is a testament to NVIDIA’s commitment to pushing the frontiers of graphics technology, offering a versatile and powerful tool for professionals across various industries.

Enhanced Visual Accuracy with RTX A5000

The RTX A5000’s second-generation RT Cores improve visual accuracy and handle complex motion dynamics in real time. This matters most in scenes with motion blur, a common phenomenon in the real world. Traditional rendering methods often struggle to depict motion blur accurately, particularly under partial occlusion, where a moving object partially blocks the view of objects behind it.

The hardware-accelerated motion blur capability of the RTX A5000 addresses this challenge head-on. It allows for the intricate portrayal of blurred regions, which naturally reveal information about hidden background areas. This effect is particularly notable in scenes where objects are in motion, causing their silhouette to blur both outward and inward, thereby creating a semi-transparent appearance around their edges.

This advanced rendering technique combines post-process image filtering with hardware-accelerated ray tracing. Each frame is processed to retrieve background information for inner blur regions, which were traditionally difficult to render accurately. By advancing rays recursively into the scene, the RTX A5000 uncovers the true colors and details of the background, integrating them seamlessly with the foreground. This not only enhances the accuracy of the render but also maintains interactive frame rates, crucial for real-time applications.

Moreover, the RTX A5000’s approach involves gathering samples from a heuristic range of nearby pixels, enabling a more nuanced and precise rendering of motion blur. This process ensures that the transition between blurred and sharp regions is smooth and natural, avoiding the common pitfalls of post-processing techniques that often led to inconsistencies and visual artifacts.
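The combination described above, a post-process gather over nearby pixels plus a background lookup for the inner blur region, can be sketched on a single scanline. This is a minimal illustration, not NVIDIA's implementation: the function name `motion_blur_scanline`, the `half_span` parameter, and the box-filter weighting are assumptions made for the example, and the `background` list stands in for colors that the real technique would obtain by tracing rays behind the moving object.

```python
from typing import List, Optional


def motion_blur_scanline(
    foreground: List[Optional[float]],
    background: List[float],
    half_span: int,
) -> List[float]:
    """Composite a moving foreground over a background along one scanline.

    foreground: per-pixel intensity of the moving object, or None where it is absent.
    background: per-pixel intensity of the scene behind the object. In this sketch
                it is simply given; it plays the role of the ray-traced lookup.
    half_span:  how many pixels the object smears to each side during the exposure
                (hypothetical parameter; a real renderer derives it from velocity).
    """
    n = len(foreground)
    weight = 1.0 / (2 * half_span + 1)  # box filter over the smear window
    result = []
    for x in range(n):
        coverage = 0.0  # fraction of the exposure during which the object covers x
        fg_sum = 0.0
        # Gather from the range of neighbors that could have smeared onto pixel x.
        for sx in range(max(0, x - half_span), min(n - 1, x + half_span) + 1):
            if foreground[sx] is not None:
                coverage += weight
                fg_sum += weight * foreground[sx]
        # Whatever the object does not cover is filled with the background color,
        # which is what makes the blurred silhouette look semi-transparent.
        result.append(fg_sum + (1.0 - coverage) * background[x])
    return result


if __name__ == "__main__":
    fg = [None] * 4 + [1.0] * 5 + [None] * 4  # bright object, 5 pixels wide
    bg = [0.0] * 13                            # dark background
    for x, value in enumerate(motion_blur_scanline(fg, bg, half_span=2)):
        print(x, round(value, 2))
```

Pixels just outside the object pick up some of its color (outward blur), while pixels at the object's edge mix in the background (inward blur). Only the center of the object stays fully opaque.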

In essence, the RTX A5000 revolutionizes the way motion blur is rendered. By leveraging the power of ray tracing and advanced hardware acceleration, it provides artists and developers with the tools to create more realistic and visually accurate scenes, particularly in dynamic environments where motion and speed are integral elements.

AI and Data Science in Rendering with RTX A5000

The integration of AI and data science into rendering processes has opened new frontiers in digital creation, and the NVIDIA RTX A5000 GPU stands at the vanguard of this technological revolution. With its advanced features, the RTX A5000 is not just a graphics powerhouse but also a beacon of innovation in AI-driven rendering and data science applications.

The heart of the RTX A5000’s AI capabilities lies in its third-generation Tensor Cores. These cores are engineered to accelerate AI and data science model training, delivering training performance up to 10 times faster than the previous generation. This leap is crucial for tasks that require real-time AI processing, such as predictive analytics in data-intensive scenarios and deep learning applications. The Tensor Cores speed up the matrix computations at the core of AI algorithms, making the RTX A5000 a strong choice for professionals working with AI-enhanced rendering techniques or developing AI-driven applications.

TensorFlow benchmarks on the RTX A5000 illustrate its capabilities in AI and deep learning. Tests on networks such as ResNet50, ResNet152, Inception v3, and GoogLeNet show near-linear scaling up to 8 GPUs, meaning that training throughput grows almost in proportion to the number of GPUs. This scalability lets the GPU handle the large datasets and complex models that form the backbone of modern AI and data science projects.
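To make "near-linear scaling" concrete, a common measure is scaling efficiency: throughput on N GPUs divided by N times the single-GPU throughput. The sketch below shows the arithmetic only. The throughput values are placeholders chosen for illustration, not benchmark results, and `scaling_efficiency` is a hypothetical helper name.

```python
from typing import Dict


def scaling_efficiency(throughputs: Dict[int, float]) -> Dict[int, float]:
    """Return efficiency per GPU count, relative to the single-GPU throughput.

    throughputs maps GPU count -> measured throughput (e.g. images/sec) and
    must contain an entry for 1 GPU. An efficiency of 1.0 is perfectly linear.
    """
    base = throughputs[1]
    return {n: t / (n * base) for n, t in sorted(throughputs.items())}


if __name__ == "__main__":
    # Placeholder numbers for illustration only, NOT measured on an RTX A5000.
    example = {1: 100.0, 2: 196.0, 4: 384.0, 8: 752.0}
    for gpus, eff in scaling_efficiency(example).items():
        print(f"{gpus} GPU(s): {eff:.0%} of linear")
```

An efficiency that stays close to 100% as the GPU count grows is what the benchmarks describe as near-linear.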

Moreover, the RTX A5000 is not just about raw power but also about efficiency and compatibility. Its memory can be expanded to 48GB using NVIDIA NVLink®, and its support for PCIe Gen 4 keeps data transfers for AI, data science, and 3D modeling tasks fast. This compatibility extends to virtual workstation software, allowing multiple high-performance virtual instances, which is vital for collaborative AI development environments.

In summary, the RTX A5000 GPU, with its advanced Tensor Cores, scalability, and compatibility, redefines the landscape of AI and data science in rendering. It empowers creators and data scientists to push the boundaries of what’s possible, blending the realms of artistic vision and AI innovation into a cohesive and potent toolkit.

Handling Memory-Intensive Workloads with RTX A5000

In the realm of high-end GPU servers, handling memory-intensive workloads is a critical benchmark for performance. The NVIDIA RTX A5000, with its 24 GB of GDDR6 memory, stands out as a titan in this regard, offering a substantial leap in memory capacity and speed compared to its predecessors. This advancement is not just a quantitative increase in memory but a qualitative enhancement in handling data-rich tasks, essential in various professional applications, from virtual production to complex engineering simulations.

The GDDR6 memory in the RTX A5000 is central to its performance in graphics-intensive tasks, and the memory standard itself continues to advance. Samsung’s 24Gbps GDDR6 DRAM, for example, is rated over 30% faster than the company’s previous 18Gbps product. That part shows the headroom of GDDR6 for future high-end graphics cards rather than the A5000’s own configuration, which NVIDIA specifies at 768 GB/s of memory bandwidth. In both cases, the goal is to move massive datasets quickly enough for AI and metaverse applications that demand real-time data processing.
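The 30% figure follows directly from the per-pin data rates, and the same numbers translate into peak bandwidth once a bus width is fixed. The sketch below shows the arithmetic. The 384-bit bus width is an assumption used for illustration, and both function names are hypothetical.

```python
def peak_bandwidth_gb_s(data_rate_gbps_per_pin: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s: per-pin rate (Gbit/s) x pins / 8 bits per byte."""
    return data_rate_gbps_per_pin * bus_width_bits / 8


def speedup_percent(new_rate: float, old_rate: float) -> float:
    """Relative improvement of new_rate over old_rate, in percent."""
    return (new_rate / old_rate - 1.0) * 100.0


if __name__ == "__main__":
    bus_width = 384  # assumed bus width in bits, for illustration
    for rate in (18, 24):
        print(f"{rate} Gbps/pin -> {peak_bandwidth_gb_s(rate, bus_width):.0f} GB/s peak")
    print(f"24 vs 18 Gbps: {speedup_percent(24, 18):.1f}% faster")
```

These are theoretical peaks; sustained bandwidth in real workloads is lower.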

Moreover, the RTX A5000’s memory is equipped with Error-Correcting Code (ECC), ensuring data integrity and reliability for mission-critical applications. This feature is particularly important in fields where computational accuracy is non-negotiable, such as scientific research, financial modeling, and high-precision engineering simulations.
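The principle behind error-correcting codes can be shown with the textbook Hamming(7,4) code, which stores 4 data bits in 7 bits and can repair any single flipped bit. This is only an illustration of the idea: the source does not say which code the RTX A5000's memory uses, and the function names below are chosen for the example.

```python
from typing import List, Tuple


def hamming74_encode(data: List[int]) -> List[int]:
    """Encode 4 data bits as a 7-bit codeword laid out as p1 p2 d1 p3 d2 d3 d4."""
    d1, d2, d3, d4 = data
    p1 = d1 ^ d2 ^ d4  # parity over codeword positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4  # parity over codeword positions 2, 3, 6, 7
    p3 = d2 ^ d3 ^ d4  # parity over codeword positions 4, 5, 6, 7
    return [p1, p2, d1, p3, d2, d3, d4]


def hamming74_decode(codeword: List[int]) -> Tuple[List[int], int]:
    """Correct up to one flipped bit; return (data bits, 1-based error position or 0)."""
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s3 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s3  # equals the position of the flipped bit
    if syndrome:
        c[syndrome - 1] ^= 1  # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], syndrome


if __name__ == "__main__":
    word = hamming74_encode([1, 0, 1, 1])
    corrupted = list(word)
    corrupted[4] ^= 1  # simulate a single-bit memory error
    data, position = hamming74_decode(corrupted)
    print("recovered data:", data, "error was at position", position)
```

The decoder recomputes the parity checks, and the pattern of failed checks points at the flipped bit, so the stored value is repaired transparently to the application.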

Another standout feature of the RTX A5000 is its compatibility with NVIDIA NVLink, which offers a significantly faster alternative for multi-GPU systems compared to traditional PCIe-based solutions. NVLink enables the scaling of memory and performance across multiple GPUs, effectively doubling the memory capacity to 48GB when two RTX A5000s are connected. This scalability is crucial for tackling larger datasets, models, and scenes, which are increasingly common in advanced visual computing workloads.

In conclusion, the RTX A5000’s 24GB of GDDR6 memory, coupled with features like ECC and NVLink compatibility, positions this GPU as an ideal solution for handling memory-intensive workloads. This capability is indispensable in a wide range of professional applications, making the RTX A5000 a pivotal tool in the arsenal of any professional working with data-rich environments.
